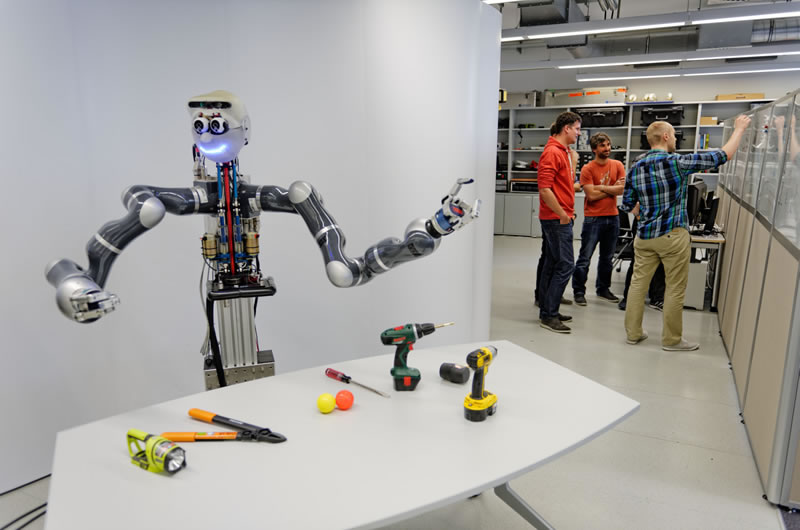

The Interactive Perception group is part of the Autonomous Motion Department at the Max Planck Institute for Intelligent Systems.

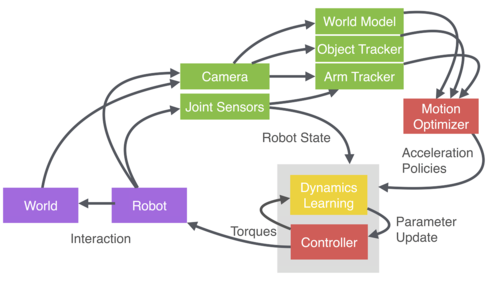

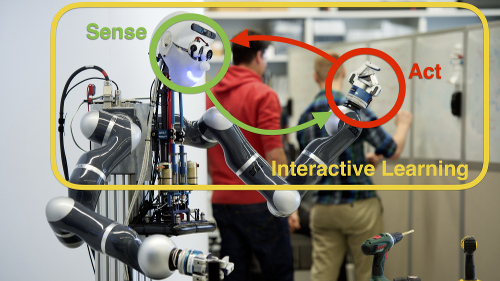

Research in Interactive Perception aims to understand the key principles behind the autonomy, reactivity, and robustness of biological systems when physically interacting with complex, dynamic, real-world environments. We take a synthetic approach by developing fundamental methods and algorithms that are then implemented and analyzed on real robotic systems.

We recognize purposeful interaction with the environment as one of these key principles. Traditionally, such interaction has been avoided as much as possible in an attempt to simplify the problem of autonomous grasping and manipulation. Yet physical interaction with the environment is central to robustness (i) in manipulation, by exploiting contact constraints, and (ii) in perception, by generating a rich sensory signal that would otherwise not be present.

We also recognize the building of complete systems and their organization in perception-action loops as another key principle. Finally, we aim to endow robots with the key ability of autonomous learning through interaction.

News

| Date | News |
| --- | --- |
| Aug 1st | From September 1st, Jeannette will become Assistant Professor at Stanford. She will remain affiliated as a guest researcher with the MPI. |
| Jun 14th | Our paper "On the relevance of grasp metrics for predicting grasp success" got accepted at IROS 2017. Preprint coming soon! |
| Jun 9th | Our survey on interactive perception got accepted at the IEEE Transactions on Robotics. We would like to thank the anonymous reviewers for their thoughtful comments and the cited authors for their constructive feedback! Check out the preprint! |
| Jun 1st | Our paper on Probabilistic Articulated Real-Time Tracking for Robot Manipulation was a finalist for the Best Robotic Vision Paper award at ICRA 2017. Congrats to Cristina, Jan and Manuel for this awesome recognition of their research! |
| Jun 1st | Cristina and Jeannette received the Best Reviewer Award at ICRA 2017. A big thanks to the CEB for this recognition! |
| May 29th | We publicly released open-source code and data sets on Bayesian articulated object tracking. Check out the project page on GitHub! |
| April 28th | Jeannette hosted a local networking event for female researchers from Tübingen. It was organized through the network LeadersLikeHer by Enkelejda Kasneci. It was great having you! |

Collaborators

We are fortunate to be working with great colleagues and researchers at the Max Planck Institute (MPI) for Intelligent Systems, Tübingen, as well as from other international research institutions.

Collaborators at MPI Tübingen

- Franziska Meier, Autonomous Motion Department

- Ludovic Righetti, Movement Generation and Control Group

- Alexander Herzog, Autonomous Motion Department

- Miroslav Bogdanovic, Movement Generation and Control Group

- Sebastian Trimpe, Autonomous Motion Department

- Alonso Marco Valle, Autonomous Motion Department

- Jim Mainprice, Autonomous Motion Department

- Philipp Hennig, Probabilistic Numerics Group

- Stefan Schaal, Autonomous Motion Department

- Felix Grimminger, Autonomous Motion Department

- Vincent Berenz, Autonomous Motion Department

External collaborators

- Danica Kragic, Professor, Royal Institute of Technology, Sweden

- Gaurav Sukhatme, Professor, USC, CA, USA

- Oliver Brock, Professor, TU Berlin, Germany

- Marc Toussaint, Professor, University of Stuttgart, Germany

- Nathan Ratliff, CEO, Lula Robotics, WA, USA

- Matthias Bethge, Professor, University of Tübingen, Germany

- Tamim Asfour, Professor, Karlsruhe Institute of Technology, Germany

- Antonio Morales, Professor, Universitat Jaume I, Spain

Alumni

Former members of our group.

- Jan Issac (Research Engineer until 2017), now: Senior Software Engineer at NVIDIA

- Cristina Garcia-Cifuentes (PostDoc until 2017), now: Research Engineer at Amazon Research

- Anna Deichler (Internship, 2017)

- Carlos Rubert (Internship, 2016), now: PhD student at UJI

- Ke Wang (Master thesis, 2016), now: PhD student at Imperial College London

- Andrea Bajcsy (Internship, 2016), now: PhD student at UC Berkeley

- Alina Kloss (Master thesis, 2015), now: PhD student with us

- Felix Widmaier (Master thesis, 2015)

- Thibault Portigliatti (Internship, 2015), now: Student at ENSTA ParisTech

- Alonso Marco Valle (Master thesis, 2015), now: PhD student at AMD

- Holger Kaden (Diploma thesis, 2014)

- Sophie Laturnus (Bachelor thesis, 2014)

- Claudia Pfreundt (Bachelor thesis, 2014)

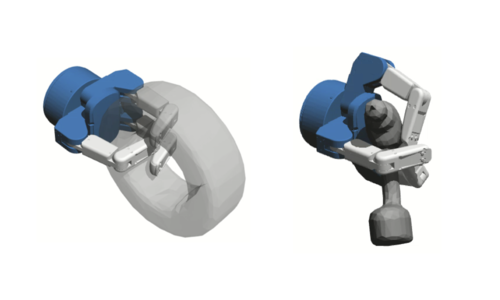

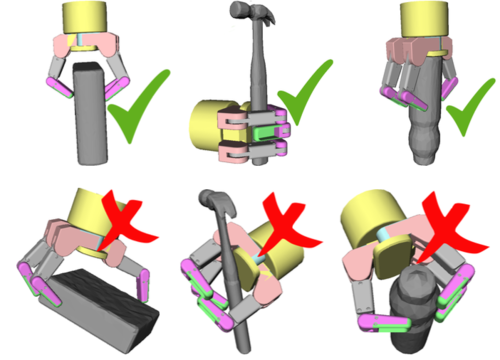

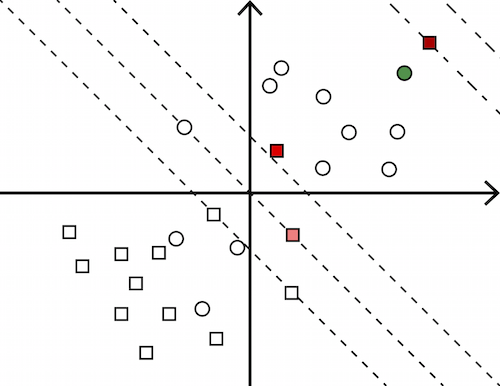

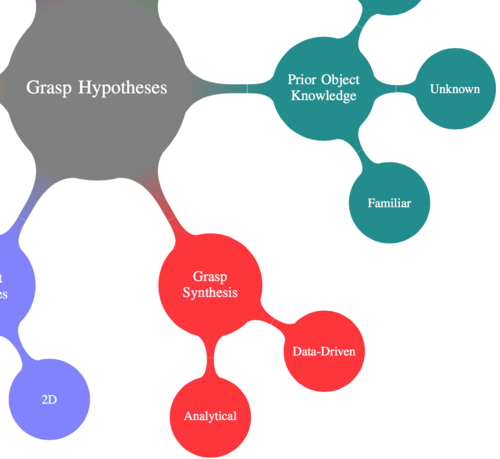

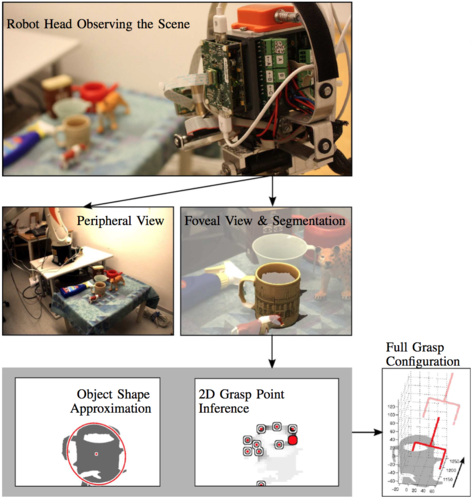

Learning to Grasp

Traditionally, the problem of robotic grasping has been formalized under the assumption of perfect knowledge of the object, the robot hand, and their relative pose. Simplifying assumptions were made about contact models, hand kinematics and capabilities, or the structure of the environment. While this allows elegant solutions to multi-contact planning, many of these assumptions do not translate well to the real world, which is riddled with uncertainty.

We have worked on the problem of how a robot can learn to grasp when only partial and noisy information about the object, the robot hand, and their relative pose is available. We proposed different feature representations, learning mechanisms, and training data.

Research projects and papers:

Learning to Grasp from Big Data

Template-Based Learning of Model Free Grasping

Data-Driven Grasp Synthesis - A Survey

Visual Tracking

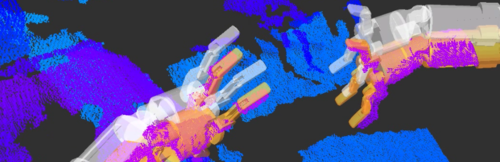

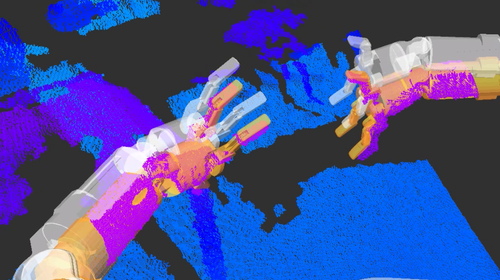

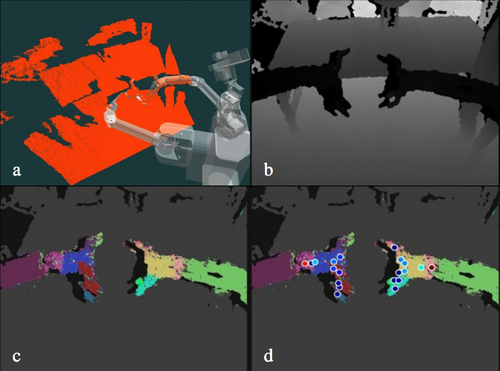

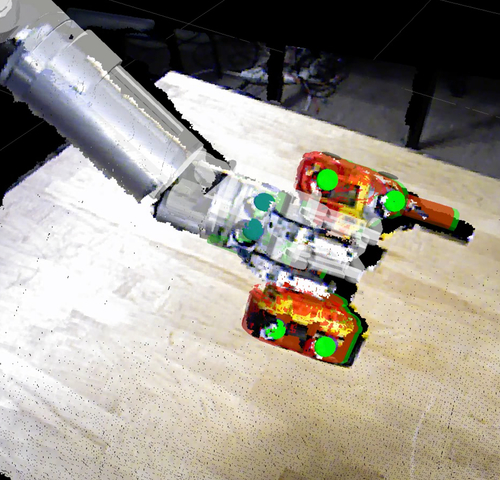

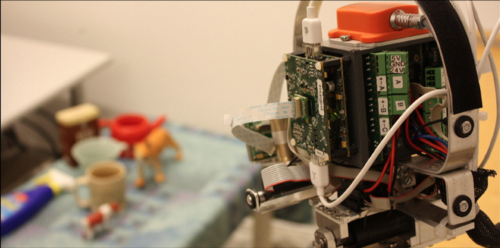

One of the crucial capabilities required for autonomous manipulation and grasping is hand-eye coordination. It relies on continuous feedback on the location of the robot hand and the object during manipulation. We develop principled methods that endow a robot with this critical ability and that address the following challenges: strong and long-term occlusions, high-dimensional measurements and state spaces, real-time demands, and delays between sensor streams of different modalities. We have also released data sets to benchmark different approaches to object and robot arm tracking.

Research projects:

Probabilistic Object and Manipulator Tracking

Robot Arm Pose Estimation as a Learning Problem

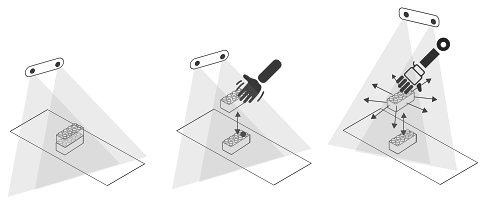

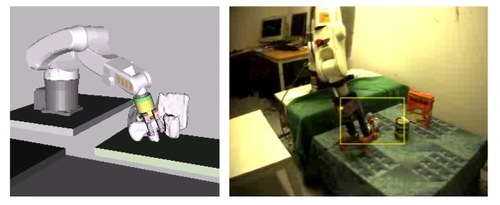

Vision-based Control and Robotic Systems

Using visual sensory data directly as feedback in a controller is a challenging problem. Compared to the traditional sensors around which control loops are usually closed, vision sensors produce high-dimensional, noisy data that reflects only partial information about the environment. Furthermore, this data arrives slowly and may be significantly delayed.

Given these challenges, we investigate how to robustly close control loops around vision sensors, and we evaluate our approaches extensively on real robotic platforms.

Research projects and papers:

Real-Time Perception meets Reactive Motion Generation

Autonomous Robotic Manipulation

Task-based Grasp Adaptation

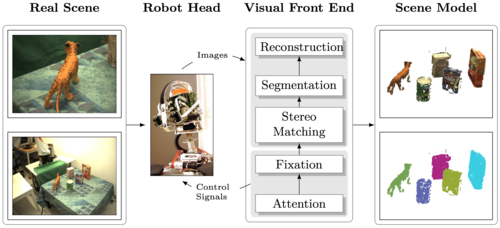

Interactive Perception

A robot has different means to actively explore and better understand its environment. It can look around and fixate on interesting areas, or it can ask someone for more information. Physical interaction is another essential means to explore and understand the environment. Such interaction produces informative sensory signals that would otherwise not be present and that are especially important for grasping and manipulation.

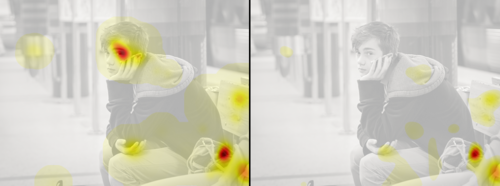

We have worked on problems where the robot augments visual data with tactile feedback to better understand the spatial structure of the environment and objects. We have also worked on learning top-down saliency.

Research projects and papers:

Interactive Perception

Global Object Shape Reconstruction by Fusing Visual and Tactile Data

Modeling Top-down Saliency for Visual Object Search

Interactive Perception: Leveraging Action in Perception and Perception in Action

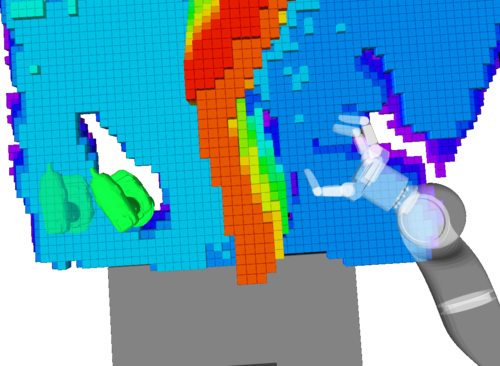

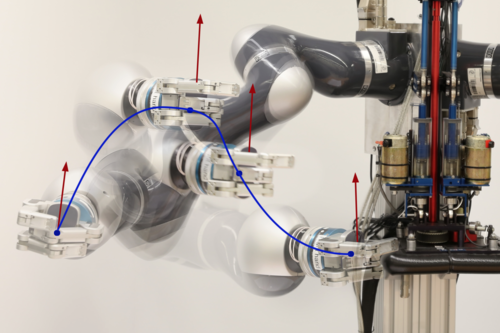

Real-time Perception meets Reactive Motion Generation

We show our real-time perception methods integrated with reactive motion generation on a real robotic platform performing manipulation tasks. For details, check out the project here!

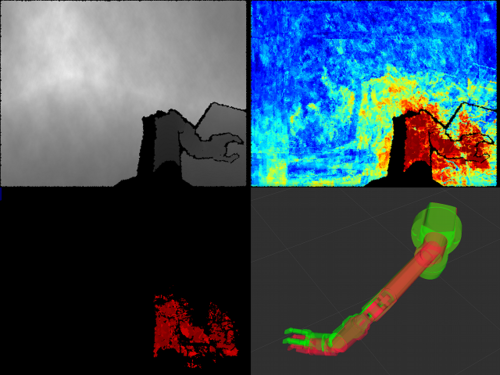

Robust Probabilistic Robot Arm Tracking

We propose probabilistic articulated real-time tracking for robot manipulation. For details, check out the paper.

This video visualizes tracking performance when different sensory inputs are used to estimate the pose and joint configuration of a robot arm. The estimate is perfect when the colored overlay matches the arm in the image.

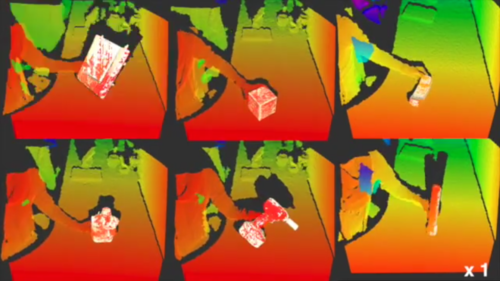

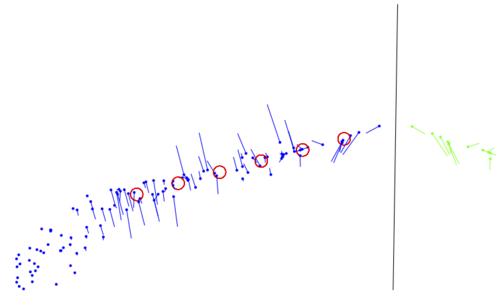

Robust Probabilistic Object Tracking

We developed a set of methods that are robust to strong and long-term occlusions and to noisy, high-dimensional measurements. The following video shows our particle-filter-based object tracking method, which tracks robustly under strong occlusions.
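As a rough illustration of the particle-filter idea mentioned above, the following minimal sketch tracks a single 1D position and simply skips the measurement update while the target is occluded. It is not the group's tracker, which works on depth images and object poses; the 1D state, the random-walk motion model, the noise levels, and all function names are assumptions made for this example.

```python
import math
import random


def particle_filter_step(particles, measurement, motion_std=0.1, meas_std=0.2, rng=random):
    """One predict/update/resample step of a bootstrap particle filter.

    particles: list of float positions (hypothetical 1D state).
    measurement: observed position, or None if the target is occluded.
    """
    # Predict: diffuse every particle with a random-walk motion model.
    predicted = [p + rng.gauss(0.0, motion_std) for p in particles]

    # Occlusion: no measurement, so keep the diffused particles unchanged.
    if measurement is None:
        return predicted

    # Update: weight each particle by a Gaussian measurement likelihood.
    weights = [math.exp(-0.5 * ((measurement - p) / meas_std) ** 2) for p in predicted]
    if sum(weights) == 0.0:
        # All particles are far from the measurement; fall back to uniform weights.
        weights = [1.0] * len(predicted)

    # Resample: draw a new particle set in proportion to the weights.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def estimate(particles):
    """Mean of the particle set, used as the state estimate."""
    return sum(particles) / len(particles)


if __name__ == "__main__":
    rng = random.Random(0)
    particles = [rng.uniform(-1.0, 1.0) for _ in range(500)]
    true_position = 0.5
    for step in range(30):
        # Steps 10-19 simulate an occlusion: no measurement is available.
        occluded = 10 <= step < 20
        z = None if occluded else true_position + rng.gauss(0.0, 0.2)
        particles = particle_filter_step(particles, z, rng=rng)
    print(f"estimate after 30 steps: {estimate(particles):.2f}")
```

During the simulated occlusion the particle set only spreads out according to the motion model, so the uncertainty grows until measurements return and the update step concentrates the particles again.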

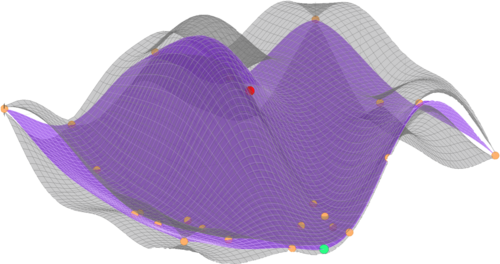

Dual Execution of Optimized Contact Interaction Trajectories

This video showcases a method that optimizes trajectories which stay in contact with the environment, exploiting the resulting contact constraints to reach a given target more robustly. The trajectories are re-planned online using force feedback.

Probabilistic Real-Time Tracking

We developed a comprehensive suite of robust, real-time visual tracking methods. More details are provided on the project page.

We released our methods as open-source code in the Bayesian object tracking project.

We provide an easy entry point on our getting-started page.

Data Sets

We also provide data sets that allow quantitative evaluation of alternative methods. They contain real depth images from RGB-D cameras and high-quality ground truth annotations collected with a VICON motion capture system.

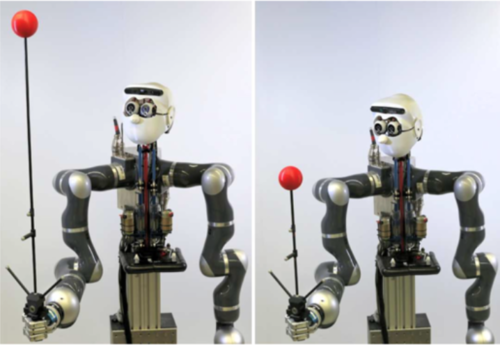

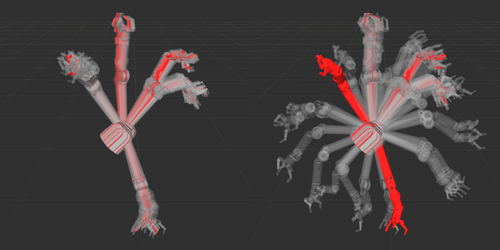

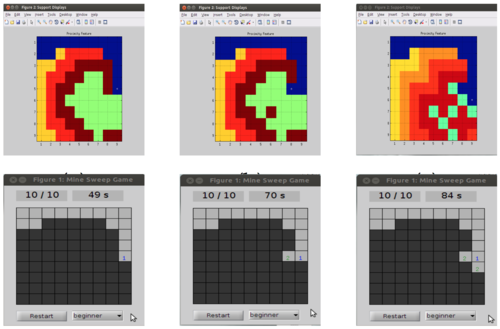

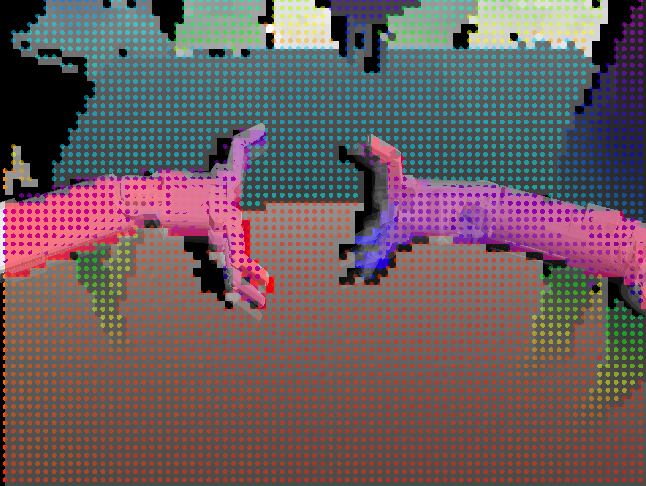

Robot Arm Tracking

The pictures below show three samples from the data set, which was recorded on our robot Apollo. The sequences cover fast to slow motion of both the robot arm and the camera, with occlusions ranging from none to very severe and long-term.

![]()

To download the data set and for further details, see the GitHub pages.

Object Tracking

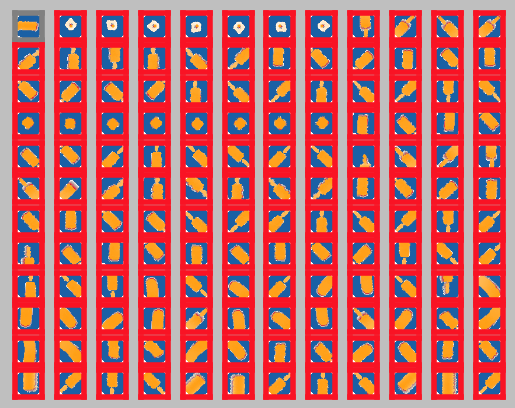

The picture below shows each object contained in the data set.

![]()

To download the data set and for further details, see the GitHub pages.

Leveraging Big Data for Grasp Planning

We recently created a database containing over 700,000 data points for learning how to grasp given only partial sensory data. For more details, check out the project page. The data can be downloaded from here. On the same page, you will find code to query the database as well as a Docker image providing all the necessary libraries.

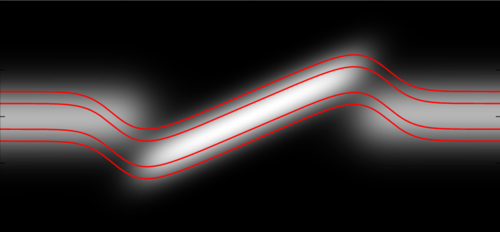

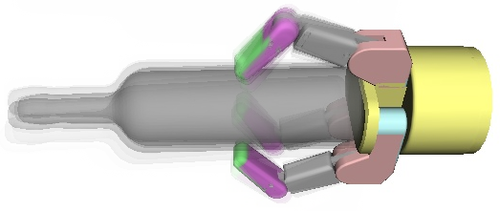

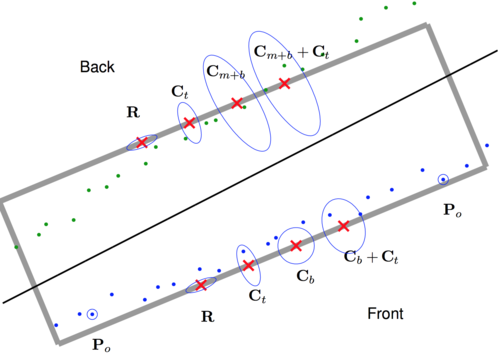

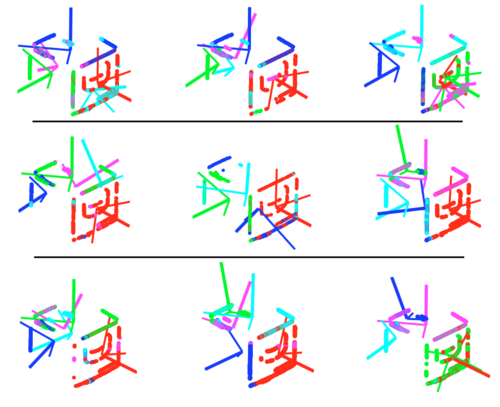

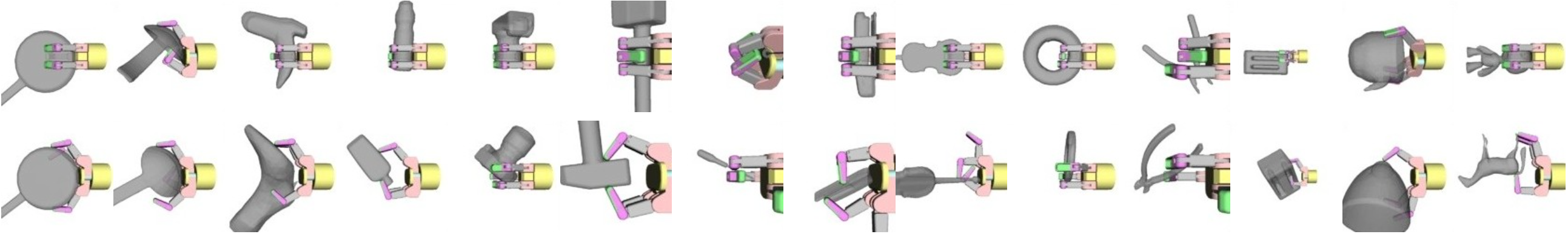

Robot Arm Pose Estimation through Pixel-Wise Part Classification

We proposed different learning approaches towards hand-eye manipulation. For more details, check out the project page. To generate simulated data for the paper on arm pose estimation, we used a realistic Kinect simulator. The code for this simulator can be found here.

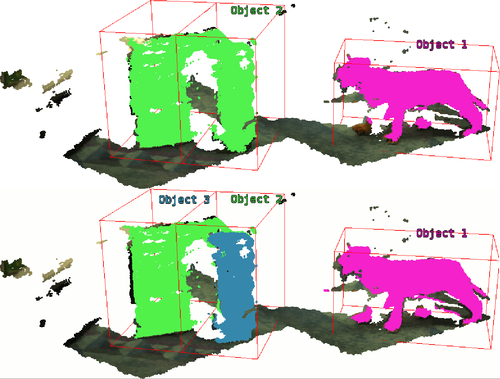

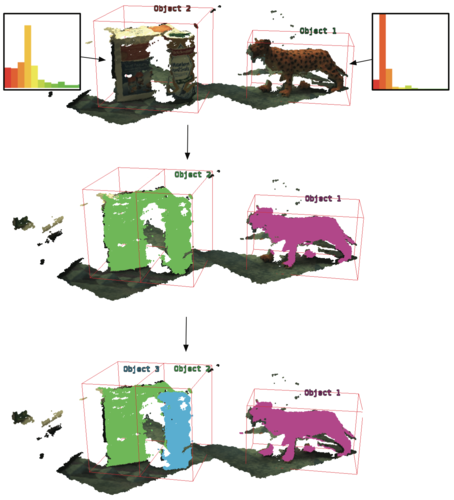

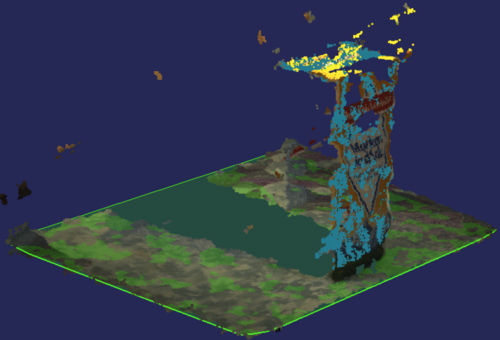

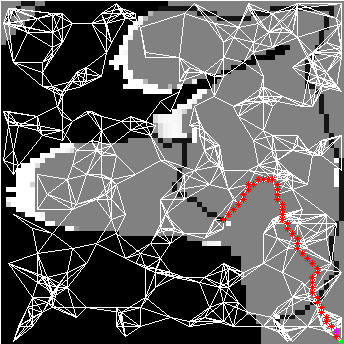

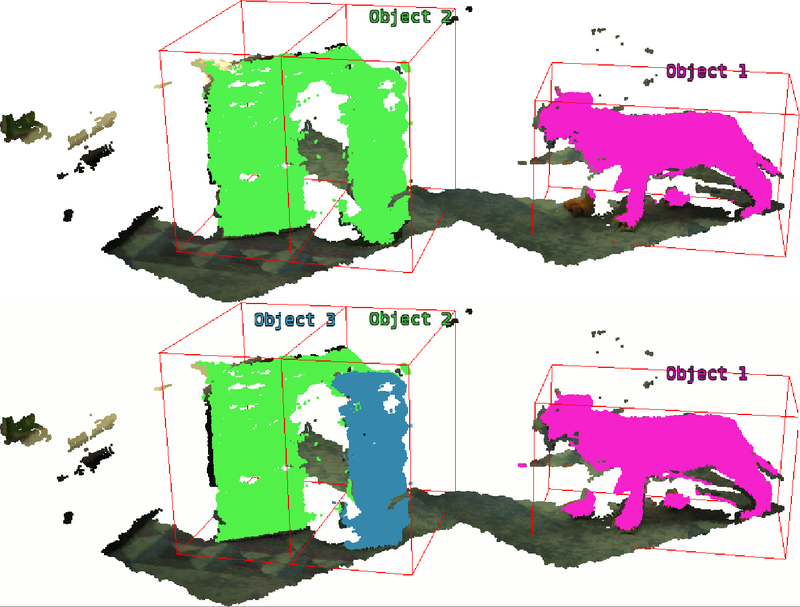

Active Realtime Segmentation

This package implements real-time active 3D scene segmentation. It is based on a GPU implementation of belief propagation. Example code for segmenting offline images and images published on ROS topics is available in the package active_realtime_segmentation.

Relatedly, object_segmentation_gui implements an rviz plugin for interactive segmentation using the real-time segmentation method described above.

rgbd_assembler is a helper package that ports RGB information from the wide-field cameras of the PR2 to its monochrome narrow-field cameras through 3D reconstruction.

The package fast_plane_detection extracts the dominant plane in a scene in real time. It is a submodule of the segmentation module mentioned above.
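The text above does not say how fast_plane_detection works internally. Purely to illustrate what "extracting the dominant plane" means, the sketch below fits a plane to a 3D point list with RANSAC, a different and commonly used technique that is not claimed to be the package's method. All function names, parameters, and thresholds are hypothetical.

```python
import random


def plane_from_points(p, q, r):
    """Return (normal, d) with normal . x + d = 0 through three points, or None if collinear."""
    u = [q[i] - p[i] for i in range(3)]
    v = [r[i] - p[i] for i in range(3)]
    # The cross product of two in-plane vectors gives the plane normal.
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    norm = sum(c * c for c in n) ** 0.5
    if norm < 1e-12:
        return None
    n = [c / norm for c in n]
    d = -sum(n[i] * p[i] for i in range(3))
    return n, d


def ransac_dominant_plane(points, threshold=0.01, iterations=200, rng=random):
    """Return the plane supported by the most points, together with its inliers."""
    if len(points) < 3:
        raise ValueError("need at least three points")
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        # Hypothesize a plane from three randomly chosen points.
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue
        n, d = plane
        # Count points whose distance to the plane is below the threshold.
        inliers = [x for x in points
                   if abs(n[0] * x[0] + n[1] * x[1] + n[2] * x[2] + d) < threshold]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

For a tabletop scene, the returned inliers would typically be the table surface, and the remaining points are candidates for objects standing on it.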

Mind the Gap - Robotic Grasping under Incomplete Observation

In the project on 3D object reconstruction fusing visual and tactile data, we tested how well we can predict the global shape of objects when assuming that they are symmetric. We tested the proposed method on the data that can be downloaded here.

The code associated with this data can be found here.
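To make the symmetry assumption concrete: if an object is mirror-symmetric about a plane, its unobserved side can be hypothesized by reflecting the observed points across that plane. The sketch below shows only this reflection step. How the symmetry plane is chosen and how tactile data refines the result are not covered, and the function name and plane parameterization are assumptions made for illustration.

```python
def mirror_points(points, normal, offset):
    """Reflect 3D points across the plane {x : normal . x = offset}.

    The normal does not need to be unit length; it is normalized here.
    """
    length = sum(c * c for c in normal) ** 0.5
    if length == 0.0:
        raise ValueError("plane normal must be non-zero")
    n = [c / length for c in normal]
    d = offset / length
    mirrored = []
    for x in points:
        # Signed distance of the point to the plane along the unit normal.
        signed_dist = sum(n[i] * x[i] for i in range(3)) - d
        # Move the point by twice that distance to land on the other side.
        mirrored.append(tuple(x[i] - 2.0 * signed_dist * n[i] for i in range(3)))
    return mirrored


if __name__ == "__main__":
    # Hypothetical observed points on the visible side of an object.
    observed = [(0.1, 0.0, 0.2), (0.3, 0.1, 0.0)]
    # Assume the symmetry plane is x = 0.
    completed = observed + mirror_points(observed, (1.0, 0.0, 0.0), 0.0)
    print(completed)
```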

Xenomai and RTNet Interface for Kuka LBR IV Arms

Our robot Apollo consists of two Kuka light-weight arms, each of which requires a communication link to a remote machine that can respond within 1 ms. We developed an adaptation of the Kuka-provided FRI communication interface to Xenomai+RTNet, which can be downloaded here.

Facial Expression Kit

We use a modified iCub facial expression kit for our robot Apollo. You can find a catkin wrapper to control the iCub facial expression kit here.

Our research is funded by the Max Planck Society, the German Research Foundation (DFG) through the CRC on Robust Vision and by the Max Planck ETH Center for Learning Systems.

2020

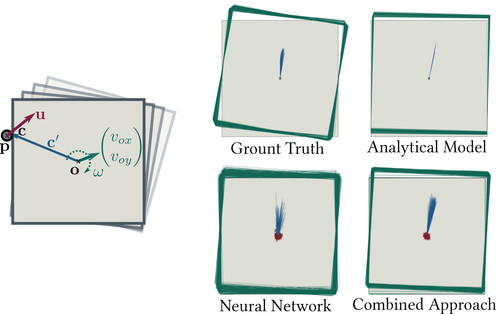

Kloss, A., Schaal, S., Bohg, J.

Combining learned and analytical models for predicting action effects from sensory data

International Journal of Robotics Research, September 2020 (article)

2019

Merzic, H., Bogdanovic, M., Kappler, D., Righetti, L., Bohg, J.

Leveraging Contact Forces for Learning to Grasp

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) 2019, IEEE, International Conference on Robotics and Automation, May 2019 (inproceedings)

2018

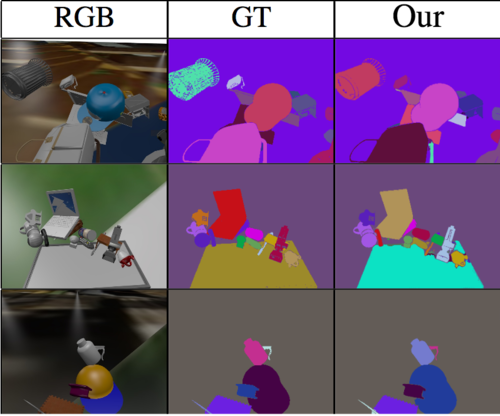

Shao, L., Tian, Y., Bohg, J.

ClusterNet: Instance Segmentation in RGB-D Images

arXiv, September 2018, Submitted to ICRA'19 (article) Submitted

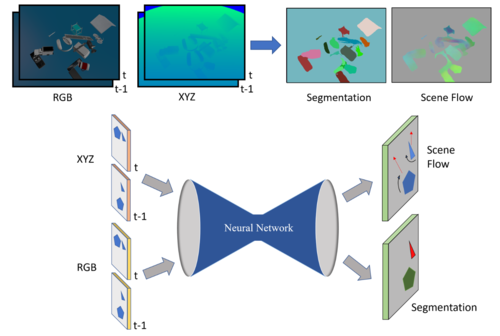

Shao, L., Shah, P., Dwaracherla, V., Bohg, J.

Motion-based Object Segmentation based on Dense RGB-D Scene Flow

IEEE Robotics and Automation Letters, 3(4):3797-3804, IEEE, IEEE/RSJ International Conference on Intelligent Robots and Systems, October 2018 (conference)

Kappler, D., Meier, F., Issac, J., Mainprice, J., Garcia Cifuentes, C., Wüthrich, M., Berenz, V., Schaal, S., Ratliff, N., Bohg, J.

Real-time Perception meets Reactive Motion Generation

IEEE Robotics and Automation Letters, 3(3):1864-1871, July 2018 (article)

2017

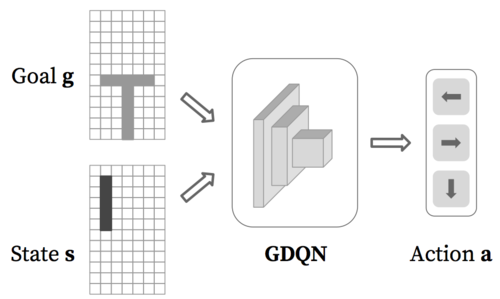

Li, W., Bohg, J., Fritz, M.

Acquiring Target Stacking Skills by Goal-Parameterized Deep Reinforcement Learning

arXiv, November 2017 (article) Submitted

Rubert, C., Kappler, D., Morales, A., Schaal, S., Bohg, J.

On the relevance of grasp metrics for predicting grasp success

In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems, September 2017 (inproceedings) Accepted

Garcia Cifuentes, C., Issac, J., Wüthrich, M., Schaal, S., Bohg, J.

Probabilistic Articulated Real-Time Tracking for Robot Manipulation

IEEE Robotics and Automation Letters (RA-L), 2(2):577-584, April 2017 (article)

Bohg, J., Hausman, K., Sankaran, B., Brock, O., Kragic, D., Schaal, S., Sukhatme, G.

Interactive Perception: Leveraging Action in Perception and Perception in Action

IEEE Transactions on Robotics, 33, pages: 1273-1291, December 2017 (article)

2016

Bohg, J., Kappler, D., Meier, F., Ratliff, N., Mainprice, J., Issac, J., Wüthrich, M., Garcia Cifuentes, C., Berenz, V., Schaal, S.

Interlocking Perception-Action Loops at Multiple Time Scales - A System Proposal for Manipulation in Uncertain and Dynamic Environments

In International Workshop on Robotics in the 21st century: Challenges and Promises, September 2016 (inproceedings)

Kloss, A., Kappler, D., Lensch, H. P. A., Butz, M. V., Schaal, S., Bohg, J.

Learning Where to Search Using Visual Attention

Proceedings of the IEEE/RSJ Conference on Intelligent Robots and Systems, IEEE, IROS, October 2016 (conference)

Dominey, P. F., Prescott, T. J., Bohg, J., Engel, A. K., Gallagher, S., Heed, T., Hoffmann, M., Knoblich, G., Prinz, W., Schwartz, A.

Implications of Action-Oriented Paradigm Shifts in Cognitive Science

In The Pragmatic Turn - Toward Action-Oriented Views in Cognitive Science, 18, pages: 333-356, 20, Strüngmann Forum Reports, vol. 18, J. Lupp, series editor, (Editors: Andreas K. Engel and Karl J. Friston and Danica Kragic), The MIT Press, 18th Ernst Strüngmann Forum, May 2016 (incollection) In press

Bohg, J., Kragic, D.

Learning Action-Perception Cycles in Robotics: A Question of Representations and Embodiment

In The Pragmatic Turn - Toward Action-Oriented Views in Cognitive Science, 18, pages: 309-320, 18, Strüngmann Forum Reports, vol. 18, J. Lupp, series editor, (Editors: Andreas K. Engel and Karl J. Friston and Danica Kragic), The MIT Press, 18th Ernst Strüngmann Forum, May 2016 (incollection) In press

Widmaier, F., Kappler, D., Schaal, S., Bohg, J.

Robot Arm Pose Estimation by Pixel-wise Regression of Joint Angles

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) 2016, IEEE, IEEE International Conference on Robotics and Automation, May 2016 (inproceedings)

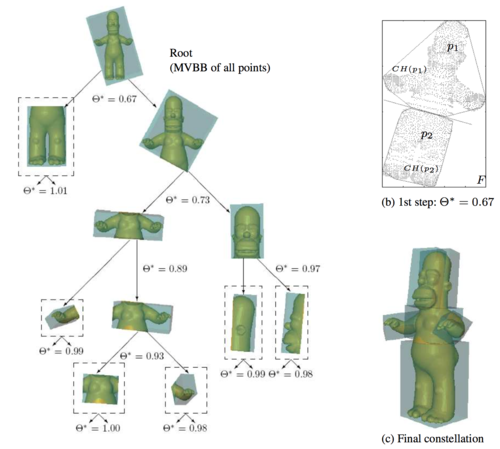

Kappler, D., Schaal, S., Bohg, J.

Optimizing for what matters: the Top Grasp Hypothesis

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) 2016, IEEE, IEEE International Conference on Robotics and Automation, May 2016 (inproceedings)

Bohg, J., Kappler, D., Schaal, S.

Exemplar-based Prediction of Object Properties from Local Shape Similarity

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) 2016, IEEE, IEEE International Conference on Robotics and Automation, May 2016 (inproceedings)

Issac, J., Wüthrich, M., Garcia Cifuentes, C., Bohg, J., Trimpe, S., Schaal, S.

Depth-based Object Tracking Using a Robust Gaussian Filter

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA) 2016, IEEE, IEEE International Conference on Robotics and Automation, May 2016 (inproceedings)

Marco, A., Hennig, P., Bohg, J., Schaal, S., Trimpe, S.

Automatic LQR Tuning Based on Gaussian Process Global Optimization

In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), pages: 270-277, IEEE, IEEE International Conference on Robotics and Automation, May 2016 (inproceedings)

Wüthrich, M., Garcia Cifuentes, C., Trimpe, S., Meier, F., Bohg, J., Issac, J., Schaal, S.

Robust Gaussian Filtering using a Pseudo Measurement

In Proceedings of the American Control Conference (ACC), Boston, MA, USA, July 2016 (inproceedings)

2015

Widmaier, F.

Robot Arm Tracking with Random Decision Forests

Eberhard-Karls-Universität Tübingen, May 2015 (mastersthesis)

Marco, A., Hennig, P., Bohg, J., Schaal, S., Trimpe, S.

Automatic LQR Tuning Based on Gaussian Process Optimization: Early Experimental Results

Machine Learning in Planning and Control of Robot Motion Workshop at the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), October 2015 (conference)

Doerr, A., Ratliff, N., Bohg, J., Toussaint, M., Schaal, S.

Direct Loss Minimization Inverse Optimal Control

In Proceedings of Robotics: Science and Systems, Rome, Italy, Robotics: Science and Systems XI, July 2015 (inproceedings)

Kappler, D., Bohg, J., Schaal, S.

Leveraging Big Data for Grasp Planning

In Proceedings of the IEEE International Conference on Robotics and Automation, May 2015 (inproceedings)

Sankaran, B., Bohg, J., Ratliff, N., Schaal, S.

Policy Learning with Hypothesis Based Local Action Selection

In Reinforcement Learning and Decision Making, 2015 (inproceedings)

Wüthrich, M., Bohg, J., Kappler, D., Pfreundt, C., Schaal, S.

The Coordinate Particle Filter - A novel Particle Filter for High Dimensional Systems

In Proceedings of the IEEE International Conference on Robotics and Automation, May 2015 (inproceedings)

2014

Bohg, J., Romero, J., Herzog, A., Schaal, S.

Robot Arm Pose Estimation through Pixel-Wise Part Classification

In IEEE International Conference on Robotics and Automation (ICRA) 2014, pages: 3143-3150, IEEE International Conference on Robotics and Automation (ICRA), June 2014 (inproceedings)

Bohg, J., Morales, A., Asfour, T., Kragic, D.

Data-Driven Grasp Synthesis - A Survey

IEEE Transactions on Robotics, 30, pages: 289-309, IEEE, April 2014 (article)

2013

Ilonen, J., Bohg, J., Kyrki, V.

Fusing visual and tactile sensing for 3-D object reconstruction while grasping

In IEEE International Conference on Robotics and Automation (ICRA), pages: 3547-3554, 2013 (inproceedings)

Wüthrich, M., Pastor, P., Kalakrishnan, M., Bohg, J., Schaal, S.

Probabilistic Object Tracking Using a Range Camera

In IEEE/RSJ International Conference on Intelligent Robots and Systems, pages: 3195-3202, IEEE, November 2013 (inproceedings)

Ilonen, J., Bohg, J., Kyrki, V.

3-D Object Reconstruction of Symmetric Objects by Fusing Visual and Tactile Sensing

The International Journal of Robotics Research, 33(2):321-341, Sage, October 2013 (article)

2012

Bohg, J., Welke, K., León, B., Do, M., Song, D., Wohlkinger, W., Aldoma, A., Madry, M., Przybylski, M., Asfour, T., Marti, H., Kragic, D., Morales, A., Vincze, M.

Task-Based Grasp Adaptation on a Humanoid Robot

In 10th IFAC Symposium on Robot Control, SyRoCo 2012, Dubrovnik, Croatia, September 5-7, 2012, pages: 779-786, 2012 (inproceedings)

Gratal, X., Romero, J., Bohg, J., Kragic, D.

Visual Servoing on Unknown Objects

Mechatronics, 22(4):423-435, Elsevier, June 2012, Visual Servoing SI (article)

2011

Bohg, J.

Multi-Modal Scene Understanding for Robotic Grasping

Trita-CSC-A 2011:17, vi, 194, KTH Royal Institute of Technology, Computer Vision and Active Perception (CVAP), Centre for Autonomous Systems (CAS), December 2011 (phdthesis)

Bohg, J., Johnson-Roberson, M., Leon, B., Felip, J., Gratal, X., Bergstrom, N., Kragic, D., Morales, A.

Mind the gap - robotic grasping under incomplete observation

In Robotics and Automation (ICRA), 2011 IEEE International Conference on, pages: 686-693, May 2011 (inproceedings)

Johnson-Roberson, M., Bohg, J., Skantze, G., Gustafson, J., Carlson, R., Rasolzadeh, B., Kragic, D.

Enhanced visual scene understanding through human-robot dialog

In Intelligent Robots and Systems (IROS), 2011 IEEE/RSJ International Conference on, pages: 3342-3348, 2011 (inproceedings)

2010

Johnson-Roberson, M., Bohg, J., Kragic, D., Skantze, G., Gustafson, J., Carlson, R.

Enhanced Visual Scene Understanding through Human-Robot Dialog

In Proceedings of AAAI 2010 Fall Symposium: Dialog with Robots, November 2010 (inproceedings)

Gratal, X., Bohg, J., Björkman, M., Kragic, D.

Scene Representation and Object Grasping Using Active Vision

In IROS’10 Workshop on Defining and Solving Realistic Perception Problems in Personal Robotics, October 2010 (inproceedings)

Bohg, J., Kragic, D.

Learning Grasping Points with Shape Context

Robotics and Autonomous Systems, 58(4):362-377, North-Holland Publishing Co., Amsterdam, The Netherlands, April 2010 (article)

Johnson-Roberson, M., Bohg, J., Björkman, M., Kragic, D.

Attention-based active 3D point cloud segmentation

In Intelligent Robots and Systems (IROS), 2010 IEEE/RSJ International Conference on, pages: 1165-1170, October 2010 (inproceedings)

Bohg, J., Johnson-Roberson, M., Björkman, M., Kragic, D.

Strategies for multi-modal scene exploration

In Intelligent Robots and Systems (IROS), 2010 IEEE/RSJ International Conference on, pages: 4509-4515, October 2010 (inproceedings)

2009

Bohg, J., Barck-Holst, C., Huebner, K., Ralph, M., Rasolzadeh, B., Song, D., Kragic, D.

Towards Grasp-Oriented Visual Perception of Humanoid Robots

International Journal of Humanoid Robotics, 06(03):387-434, 2009 (article)

Bohg, J., Kragic, D.

Grasping familiar objects using shape context

In Advanced Robotics, 2009. ICAR 2009. International Conference on, pages: 1-6, 2009 (inproceedings)

Bergström, N., Bohg, J., Kragic, D.

Integration of Visual Cues for Robotic Grasping

In Computer Vision Systems, 5815, pages: 245-254, Lecture Notes in Computer Science, Springer Berlin Heidelberg, 2009 (incollection)