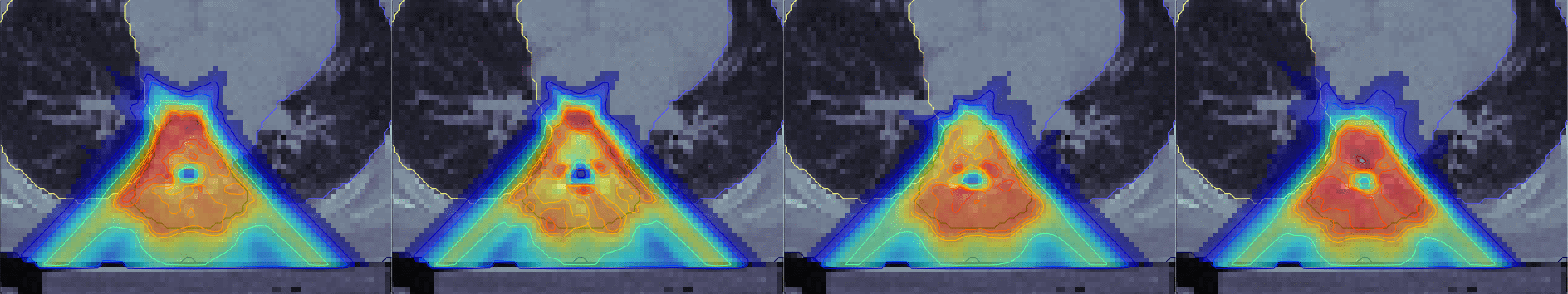

Several hypotheses for the dose deposited in a spinal tumor under a traditional treatment plan. The samples are representative of an entire population that the novel algorithm produced in this project considers simultaneously and optimizes for reduced variance.

Fast Probabilistic Treatment Planning for Radiation Tumor Therapy

Software solutions for scientific and technical tasks usually do not consist of a single computational step, but rather are a pipeline of computations. An ongoing collaboration between the research group on probabilistic numerics and the Optimization Group at the German Cancer Research Centre in Heidelberg has offered an opportunity for us to test mathematical ideas for the propagation of uncertainty through such chains of computation while also producing tangible medical results.

The semi-automated production of treatment plans is an everyday occurrence in clinical practice: Tumor patients who are scheduled for radiation treatment by their oncologist come in for an imaging session in a CT or MRI scanner. The resulting 3D image is annotated by a physician, who outlines both tumor tissue and surrounding organs at risk of unwanted radiation damage. This volumetric data provides the input to an optimization algorithm, which sets the parameters of a treatment system (angles, energies, and shape of treatment beams). In advanced treatment systems, in particular those using heavy ions as the treatment probe, this optimization problem regularly has several thousand parameters. The patient then comes in for a sequence of treatments.

This entire process is subject to a host of sources of uncertainty, from the imaging process, through human labeling, the optimization algorithm itself, the mechanical imperfections of patient placement during treatment, to complicated and correlated physical and biological sources of uncertainty about the reaction of each cell to the delivered radiation dose.

In a number of sub-projects with our collaborators in Heidelberg, we were able to construct a framework for the analytical and numerically efficient computation and propagation of such input uncertainties [ ]. The framework can separate the effects of various sources of uncertainty [ ], including highly nonlinear biological effects [ ], and efficiently propagate them through the optimization process [ ] to produce an improved treatment plan that is more robust to errors and reduces the risk of complications for patients. From the point of view of research in probabilistic numerics, this strand of work provides examples of both the feasibility and the concrete usability of uncertainty propagation in compartmental computations: By casting each step of the pipeline in terms of structured Gaussian distributions, uncertainty from various sources can be tracked, monitored, and controlled at feasible computational overhead. There is a deeper philosophical insight hidden inside these practical tools: Because probability theory does not differentiate between types of uncertainty but captures everything in the universal language of probability measures, there is no fundamental reason to distinguish between numerical, physical, experimental, or philosophical uncertainty in computational practice either. The kinds of uncertainty caused by finite data, by a finite computational budget, by imperfect physical measurements, and even by quantum-mechanical aspects of the interaction between accelerated heavy ions and DNA molecules all fit into one joint Gaussian distribution.
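
To make the idea of casting each pipeline step in Gaussian terms more concrete, the following is a minimal sketch and not the project's actual implementation. It assumes that every step is a linear map with additive Gaussian noise, so a Gaussian belief N(m, S) passed through y = A x + e with e ~ N(0, Q) becomes N(A m, A S Aᵀ + Q). The nonlinear biological effects mentioned above are not covered by this toy. All names (`propagate`, `steps`) and all numbers are hypothetical and chosen only for illustration.

```python
# Illustrative sketch only: Gaussian uncertainty propagation through a chain
# of linear steps. Matrices and numbers are made up, not taken from the project.

def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(a):
    return [list(col) for col in zip(*a)]

def propagate(mean, cov, A, noise_cov):
    """Push N(mean, cov) through y = A x + e, with e ~ N(0, noise_cov)."""
    new_mean = [sum(a_ij * m_j for a_ij, m_j in zip(row, mean)) for row in A]
    a_cov_at = matmul(matmul(A, cov), transpose(A))
    n = len(a_cov_at)
    new_cov = [[a_cov_at[i][j] + noise_cov[i][j] for j in range(n)]
               for i in range(n)]
    return new_mean, new_cov

# Hypothetical input belief: two uncertain, uncorrelated quantities.
out_mean = [1.0, 2.0]
out_cov = [[0.04, 0.0],
           [0.0, 0.09]]

# Hypothetical two-stage pipeline: each stage is (linear map A, noise covariance Q).
steps = [
    ([[1.0, 0.5], [0.0, 1.0]], [[0.01, 0.0], [0.0, 0.01]]),
    ([[2.0, 1.0]], [[0.05]]),
]

# Each stage adds its own uncertainty; the output covariance tracks all of it.
for A, Q in steps:
    out_mean, out_cov = propagate(out_mean, out_cov, A, Q)

print("output mean:", out_mean)
print("output covariance:", out_cov)
```

Because every stage contributes only a term of the form A S Aᵀ + Q, uncertainty from different sources enters the same covariance matrix, whether it comes from measurement noise or from a limited computational budget. This matches the point that no separate treatment of different kinds of uncertainty is needed.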