Integration of Visual Cues for Robotic Grasping

2009

Book Chapter


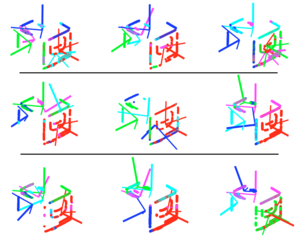

In this paper, we propose a method that generates grasping actions for novel objects based on visual input from a stereo camera. We integrate two methods, each advantageous for predicting either how to grasp an object or where to apply a grasp. The first reconstructs a wire-frame object model through curve matching; elementary grasping actions can be associated with parts of this model. The second predicts grasping points in a 2D contour image of an object. By integrating the information from the two approaches, we generate a sparse set of full grasp configurations of good quality. We demonstrate our approach, integrated in a vision system, on complex-shaped objects as well as in cluttered scenes.

| Field | Value |
| --- | --- |
| Author(s): | Bergström, Niklas and Bohg, Jeannette and Kragic, Danica |
| Book Title: | Computer Vision Systems |
| Volume: | 5815 |
| Pages: | 245-254 |
| Year: | 2009 |
| Series: | Lecture Notes in Computer Science |
| Publisher: | Springer Berlin Heidelberg |
| Department(s): | Autonomous Motion |
| Bibtex Type: | Book Chapter (incollection) |
| Paper Type: | Conference |
| DOI: | 10.1007/978-3-642-04667-4_25 |
| Language: | English |
| URL: | http://dx.doi.org/10.1007/978-3-642-04667-4_25 |
| Attachments: | pdf |

BibTeX:

    @incollection{Niklas2009,
      title = {Integration of Visual Cues for Robotic Grasping},
      author = {Bergstr{\"o}m, Niklas and Bohg, Jeannette and Kragic, Danica},
      booktitle = {Computer Vision Systems},
      volume = {5815},
      pages = {245-254},
      series = {Lecture Notes in Computer Science},
      publisher = {Springer Berlin Heidelberg},
      year = {2009},
      doi = {10.1007/978-3-642-04667-4_25},
      url = {http://dx.doi.org/10.1007/978-3-642-04667-4_25}
    }
