Common Cartesian control methodologies are warped to bend around obstacles using an optimization over behavior up to three seconds in the future. These non-linear constrained optimization problems can be solved in 0.7 seconds by exploiting problem structure.

Motion generation is increasingly formalized as a large scale optimization over future outcomes of actions. For high dimensional manipulation platforms, this optimization is computationally so difficult that for a long time traditional approaches focused primarily on feasibility of the solution rather than even local optimality. Recent efforts, though, to understand this optimization problem holistically and to exploit structure in the problem to enhance computational efficiency, are starting to pay off. We now have very fast constrained optimizers that leverage second order information to enhance convergence and leverage problem geometry much more effectively than traditional planners. At the Autonomous Motion Department, we are exploring this space from many directions.

One of our core efforts centers around formalizing the role of Riemannian Geometry in representing how obstacles shape the geometry of their surroundings and how those models integrate efficiently into the optimization [ ]. Our optimizers account for environmental constraints as well as behavioral terms and geometric models of the robot's surroundings while maintaining the computational efficiency needed to animate fast responsive and reactive behaviors on Apollo.

Additionally, since robots can only retrieve information from the environment through limited sensors that must be processed online, no internal model of the environment will ever be sufficiently precise to act blindly. We study how our motion system can leverage the intrinsic robustness of contact controllers by explicitly optimizing a profile of desired contacts designed to reduce uncertainty [ ]. In the same way we might reach out to touch a table before picking up a glass in the dark, Apollo can localize himself efficiently through planned contact by reasoning about the trade off between uncertainty reduction and task success.

Our ongoing efforts are shaping Apollo into a friendly manipulation platform with natural unimposing motion and efficient reactive behavior.

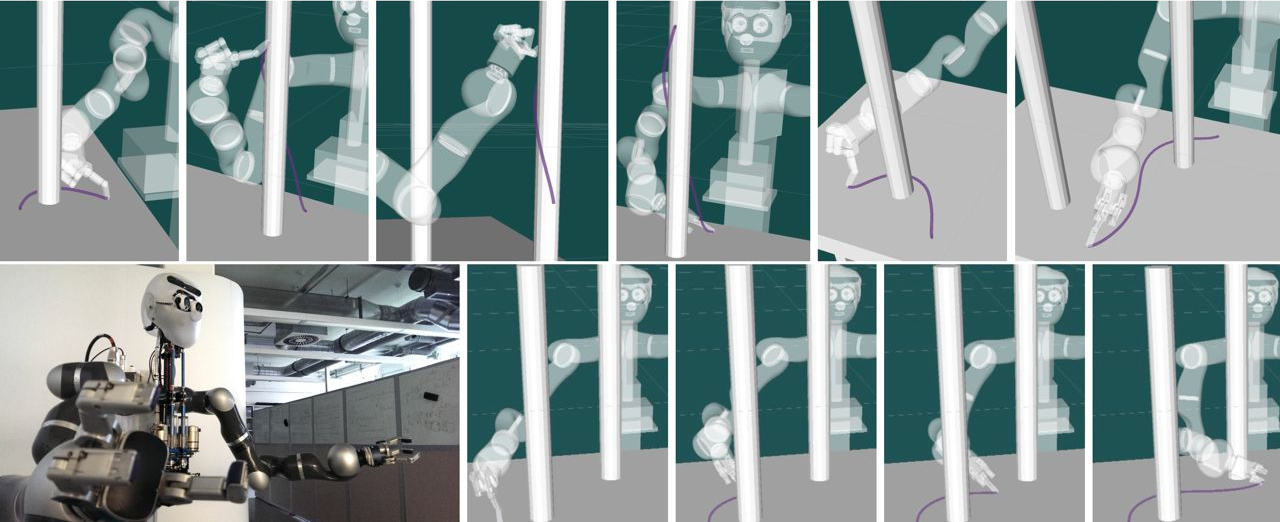

RieMO running as the core optimization process of a continuously optimizing control system for Apollo demonstrating movement agility and robustness to perturbations

All motions are generated on the fly by optimization (RieMO) running in real time. Uses force feedback during grasp measured by the Barrett hand's strain gauges.

Riemannian Motion Optimization (RieMO): Simulation demonstrations of optimized motions around, and in contact with, obstacles under different workspace metrics we've studied. Each of these motions took around .7 seconds to optimize from a naïve zero motion trajectory using code designed for usability and correctness over speed.

Motion Demos: Basic motion demonstrations on the physical Apollo platform. Each motion segment between zero velocity states is optimized using the RieMO framework. The motions use various combinations of approximate energy measures, workspace and configuration space velocity penalties, bias configuration redundancy resolution penalties, workspace motion biasing potentials (e.g. grasp and lift potentials for manipulation), joint limit penalties and constraints, environmental constraints (obs avoidance), end-effector orientation constraints, and motion target constraints.

Dual Execution: Handling modeling uncertainty formally in motion generation is computationally intractable. However, empirically we've seen that contact controllers can be surprisingly robust to uncertainty and perturbations on their own. This framework adds potentials to the motion optimizer to design motion that explicitly reduces uncertainty through contacts. Information about those desired contacts is communicated to lower level contact controllers to produce planned behavior that explicitly leverages the inherent robustness of these controllers to fight unavoidable uncertainties in the models.