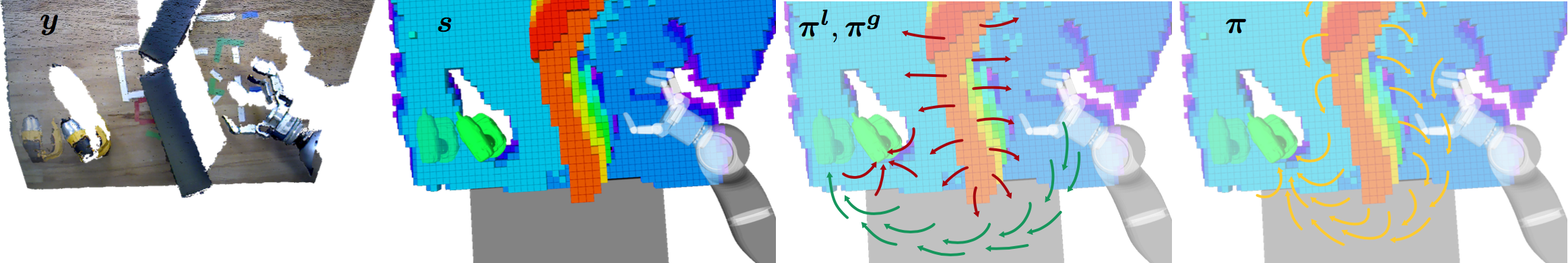

Illustration of one time step in our integrated robotic manipulation system. From left to right: sensory input y (we overlay the position of target object at an earlier time step), perceived state s of the robot and the environment (target object, obstacles), local (red) and global policies (green), and fused policy (yellow).

We address the challenging problem of robotic grasping and manipulation in the presence of uncertainty. This uncertainty is due to noisy sensing, inaccurate models and hard-to-predict environment dynamics. Our approach emphasizes the importance of continuous, real-time perception and its tight integration with reactive motion generation methods. We present a fully integrated system where real-time object and robot tracking as well as ambient world modeling provides the necessary input to feedback controllers and continuous motion optimizers. Specifically, they provide attractive and repulsive potentials based on which the controllers and motion optimizer can online compute movement policies at different time intervals. We extensively evaluate the proposed system on a real robotic platform in four scenarios that exhibit either challenging workspace geometry or a dynamic environment. We compare the proposed integrated system with a more traditional sense-plan-act approach that is still widely used. In 333 experiments, we show the robustness and accuracy of the proposed system.